Mass Surveillance. Autonomous Weapons. The Pentagon Wants Both. This AI Company Says No.

The US government just gave Anthropic until Friday to hand over its ethics... or else.

I know I only just sent out a newsletter, but this is important.

I’m not sure if you’ve all been following this, but something huge is happening right now: the U.S. government just gave an AI company until end of day Friday to hand over its ethics, or else.

The short version:

Anthropic, the company behind the AI chatbot Claude, is in a full-blown standoff with the U.S. Department of Defense (now rebranded the “Department of War”). As of today, they have until end of day Friday to back down or face serious retaliation.

Here’s the backstory.

Last summer, the Pentagon awarded contracts worth up to $200 million to four AI labs: Anthropic, OpenAI, Google, and Elon Musk’s xAI. The plan is to develop AI capabilities for military use. Already terrifying, but let’s be honest, inevitable.

Anthropic was the first AI company approved for the Pentagon’s classified networks, and until recently it was the only frontier AI provider with that kind of classified access.

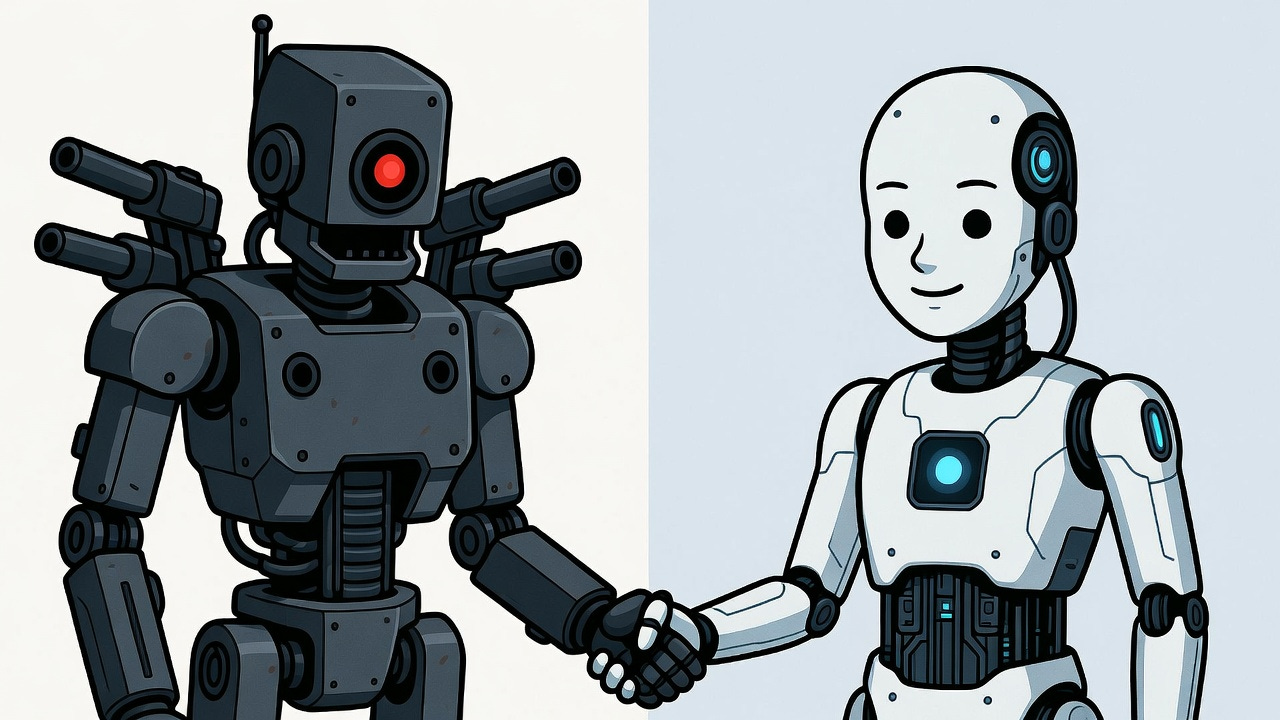

But in negotiating contracts, Anthropic has now drawn two hard lines:

No using Anthropic’s tools as an engine for mass surveillance, i.e., processing and analyzing data on Americans at scale.

No fully autonomous weapons where AI makes final targeting decisions without a human in the loop, i.e., no operations where AI chooses targets and executes lethal strikes without human oversight.

These seem pretty reasonable. No letting AI autonomously decide to kill people. No building a digital panopticon to surveil your own citizens. Constitutional stuff, arguably.

The Pentagon’s response: absolutely not.

Defense Secretary Pete Hegseth has pushed back, saying all AI vendors must allow their systems to be used for “all lawful purposes,” including warfare, intelligence, and potentially surveillance. Anthropic’s safety restrictions have been labeled “woke AI” by Hegseth and other Trump administration officials.

Meanwhile, OpenAI removed its explicit ban on military applications in January 2024. Google, which had promised after the Project Maven backlash not to build AI for weapons or mass surveillance, dropped that pledge from its AI Principles in February 2025. xAI has moved quickly to expand its Pentagon footprint, landing a major DoD contract and pushing Grok into the department’s internal AI rollout.

That leaves Anthropic, and we’re yet to see what they’ll do.

Today, Hegseth met with Anthropic CEO Dario Amodei directly and delivered an ultimatum: lift the restrictions or face consequences. Anthropic has until Friday.

If they don’t comply, the Pentagon has threatened three possible responses:

Cancel Anthropic’s existing and pending contracts, worth up to $200 million.

Label Anthropic a “supply chain risk”, a designation typically reserved for adversarial foreign suppliers. This could effectively bar all Pentagon contractors and vendors from using Claude, turning the entire defense industrial base into an enforcement mechanism.

Invoke the Defense Production Act, a Cold War-era law. This would amount to using emergency wartime powers to conscript Anthropic’s AI into military service against the company’s will, forcing it to hand over access to its models whether it consents or not.

Like, wow.

That middle one, according to Dean Ball, a former White House AI policy advisor, “would basically be the government saying, ‘If you disagree with us politically, we’re going to try to put you out of business.’”

The way the military is spinning this is to say it’s “not democratic” to let a single company impose rules above what Congress and existing law already allow.

Sorry Anthropic, how undemocratic of you to refuse to let your product be used for mass surveillance of Americans or for killing people without human oversight.

As of today’s meeting, Anthropic has no plans to budge.

Why this matters:

First of all, let’s be clear: the government already has access to your personal chats with LLM chatbots. Basically all of the major platforms automatically flag content and feed it to law enforcement. So the fact that AI chatbots are insanely personal (we treat them like our doctor, lawyer, nutritionist, therapist, life coach) and the government is already getting access to these intimate conversations is not what’s being debated here. We already lost that battle.

This is about whether these AI tools are allowed to be deployed to help process the enormous amounts of data being vacuumed up by governments right now. The military is saying they want this tool deployed with no restrictions on surveillance or autonomous lethal force. That’s what “all lawful purposes” actually means in practice.

The resolution of this standoff will likely set precedents for the entire industry. If Anthropic holds the line, other AI companies might demand similar protections. If the Pentagon walks away and gives the contracts to companies that already said yes, the message to every AI lab going forward is simple: having ethics will cost you.

The key takeaway:

This is a fight over who gets to decide what the most powerful AI tools in the world are allowed to do. Right now, one company is drawing a line and paying a real price for it. That’s not to say Anthropic is perfect -- they’re absolutely not, and have also been responsible for some terrible lobbying around AI. But I do give them credit for wanting the DoD to clarify what they plan to use AI for, and establishing boundaries around that, as it has really helped paint a picture for all of us. That the government’s response is threats, ultimatums, and invoking emergency wartime powers against a private tech company tells you everything about how seriously they want those boundaries erased.

So yeah, if I have to use one of these major platforms and I get to choose between one that is enabling an AI-powered killing machine with dystopian panopticon capabilities, or one that is mildly pushing back, I’m probably going to vote with my money for the world I want to see.

In fact, even better, I can take my money to the AI tools that are also trying to protect my privacy, like Brave’s Leo, Venice.ai, Confer.to, and many others that aren’t involved in DoD contracts at all.

Yours In Privacy,

Naomi

Consider supporting our nonprofit so that we can fund more research into the surveillance baked into our everyday tech. We want to educate as many people as possible about what’s going on, and help write a better future. Visit LudlowInstitute.org/donate to set up a monthly, tax-deductible donation.

NBTV. Because Privacy Matters.

It's been pretty interesting seeing Anthropic stand up here, and I wonder what's going to happen by Friday.

then there's stuff like this, going out to mass legacy media across the country, assuring us how fantastic it will be to do mass ai surveillance on us all, all the time. i've seen it on multiple stations across several days. ‘don't worry about privacy concerns, buildings are pixelated’. and the head honchos have cell phone apps with real time capabilities. they lead with “ai” but the tech is embedded in high-mounted 360° cameras. all for our own good of course

https://kfor.com/news/local/oklahoma-firefighters-using-ai-to-detect-wildfires/